MPs are learning how it feels to be a Gorton & Denton resident today. A newspaper packed full of dodgy … More

Category: Regulation

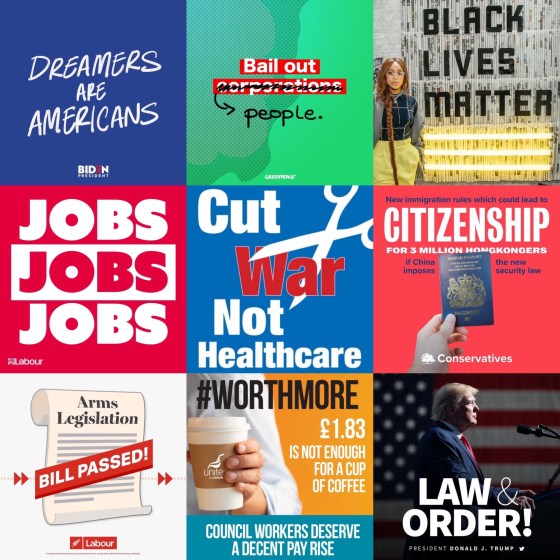

Election advertising regulation: it’s time for a change

Election advertising in the UK is barely regulated. That might come as a shock to many voters, given the role … More

The threat of generative AI to our democracy

Generative AI is already having a profound and lasting effect on the advertising industry, and political advertising is by no … More

Misleading election ads during the Super Thursday campaign

It’s my strong belief that electoral advertising should be regulated so that fact-based claims are accurate. At the moment there … More

Facebook users can now block political ads from their feeds

The next political ad you see on Facebook could be your last: the social media giant is going to allow … More

The key player in UK advertising regulation calls for political ad reform

In today’s Guardian, the CEO of the UK Advertising Standards Authority (ASA) announced his organisation’s support for the idea that … More

Twitter’s ban on political ads is bad for democracy

It’s a huge shame that Twitter have decided to stop accepting political advertising on their platform. It is understandable that … More

Truth and politics

Last night I spoke at an event called Truth and Politics, hosted by The Media Club, which is run by … More

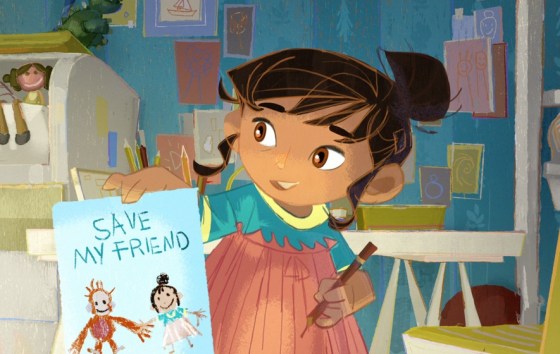

Let’s separate the real from the make believe

The Coalition for Reform in Political Advertising have launched a new campaign – “separate the real from the make believe” … More

Iceland Xmas ad shows problem with regulations that are frozen in time

The new Iceland Christmas advert uses brilliant and emotive storytelling to highlight the damage that palm oil manufacturing can have … More